What “works on my machine” looked like in Coder

I wanted a self-hosted Convex stack that developers could run inside Coder with Docker and get a reliable loop:

Quick context: Coder provides cloud developer workspaces, and Docker is used here to run the local service stack inside that workspace. Convex is the backend platform in this setup, and the Convex CLI is the command-line tool used to start and manage local development services. “S3-compatible storage” means object storage that aims to match Amazon S3 APIs but can differ in behavior depending on provider.

- start stack

- confirm health

- iterate on app code

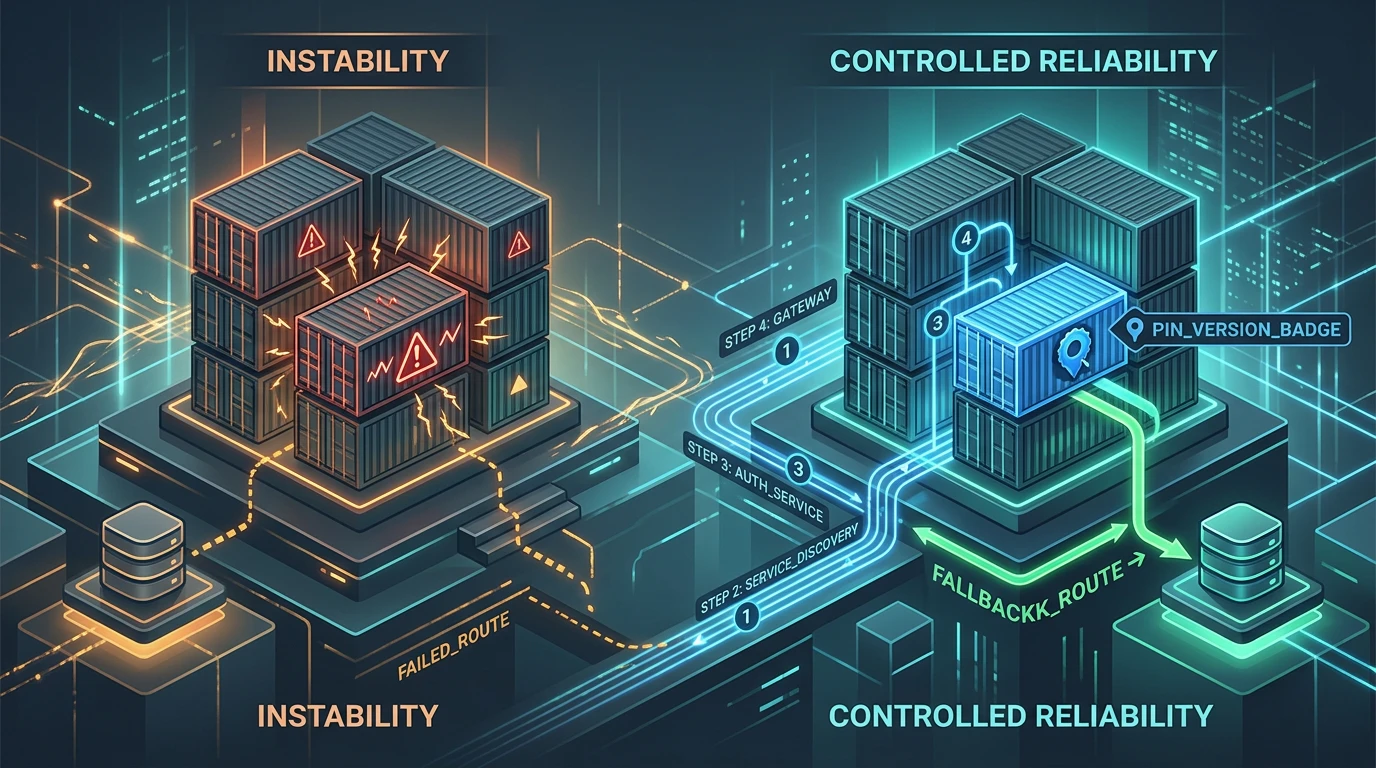

What I actually had at first was intermittent startup success. Some runs came up fine. Others failed for reasons that looked random until I mapped the system dependencies.

The main pain points were:

- Convex CLI version behavior differences

- S3-compatible storage assumptions that did not hold consistently

- container startup order pretending “started” meant “ready”

None of these are exotic problems. Together, they are enough to make a local platform feel unreliable.

CLI version pinning was the first stabilizer

In this setup, Convex CLI 1.26.1 produced issues that broke the expected startup path. Pinning to 1.24.8 gave a known-good baseline.

I do not love pinning by default, but this was the correct move. When a version introduces instability in your critical loop, stability beats purity.

The key was documenting the pin as part of the runtime contract, not as a one-off troubleshooting note. If a new developer can miss that detail, the fix did not really land.

I also treated upgrades as explicit validation work. “Unpin and hope” is not an upgrade strategy.

S3-compatible storage needed reality checks

“S3-compatible” is helpful marketing language, not a guarantee of identical behavior.

I hit edge cases with non-AWS providers that were good enough to block a smooth startup story. Instead of hand-waving that away, I documented what worked and what failed in this specific stack.

Then I added a local storage fallback path.

That fallback mattered for two reasons:

- developers could still run and test core flows when object storage was flaky

- infrastructure debugging stopped blocking product iteration

A fallback is not a substitute for production parity. It is an escape hatch that keeps momentum when external dependencies misbehave.

Startup sequencing had to be explicit

Docker starts containers quickly. Services inside those containers become usable at different times.

My earlier scripts assumed parallel startup plus optimism. That produced race conditions where downstream services tried to connect before upstream services were actually ready.

I changed startup orchestration to enforce dependency order and readiness checks.

The specific mechanism is less important than the rule:

- do not advance just because process exists

- advance when dependency is actually ready

Once this was in place, random startup failures dropped sharply.

What changed for developer experience

Before these fixes, onboarding felt like a puzzle. A new developer had to discover hidden constraints through failure.

After pinning, fallback, and startup sequencing:

- the first-run path became predictable

- troubleshooting steps became shorter and more deterministic

- support requests shifted from “it sometimes fails” to actionable issues

That is the real test for dev platform work. Can someone new run it without guessing?

Tradeoffs I accepted

These choices were pragmatic, not free.

Pinning a CLI version creates maintenance overhead. You need scheduled revalidation to avoid freezing indefinitely on old versions.

Fallback storage can hide environment-specific bugs if teams rely on it too long. I kept it framed as a development reliability path, not the default production behavior.

Explicit sequencing adds script complexity, but I will take readable orchestration over flaky startup every time.

Operational lessons from this pass

A few lessons now feel obvious.

First, treat CLI and tooling versions as part of system design, not incidental dependencies.

Second, build for degraded modes on purpose. External services fail. Your developer loop should not.

Third, make startup health criteria concrete. “Container running” is a weak signal.

Fourth, document constraints where developers actually start the stack, not buried in a postmortem.

What I would harden next

The next step is better automated validation around matrix combinations:

- pinned vs candidate CLI versions

- storage provider mode variations

- startup timing stress tests under constrained resources

I also want periodic upgrade drills so we do not accumulate “surprise debt” when eventually moving off the pinned version.

Finally, I would add clearer runtime diagnostics at startup so common failures point to likely root cause without requiring log archaeology.

Closing take

This work did not involve fancy architecture. It was disciplined reliability engineering:

- pin what is unstable

- provide fallback when dependencies are uncertain

- start services in the order they actually depend on each other

That changed Convex-in-Coder from a fragile demo into a usable development environment.

When teams say they want developer productivity, this is what it looks like in practice. You remove failure paths that waste time, and you make the remaining failure paths easier to understand.