What I wanted from MyCritters

I did not build MyCritters as a demo repo. I built it as a working environment where frontend, backend, and AI/chat behavior could evolve together without constant integration friction.

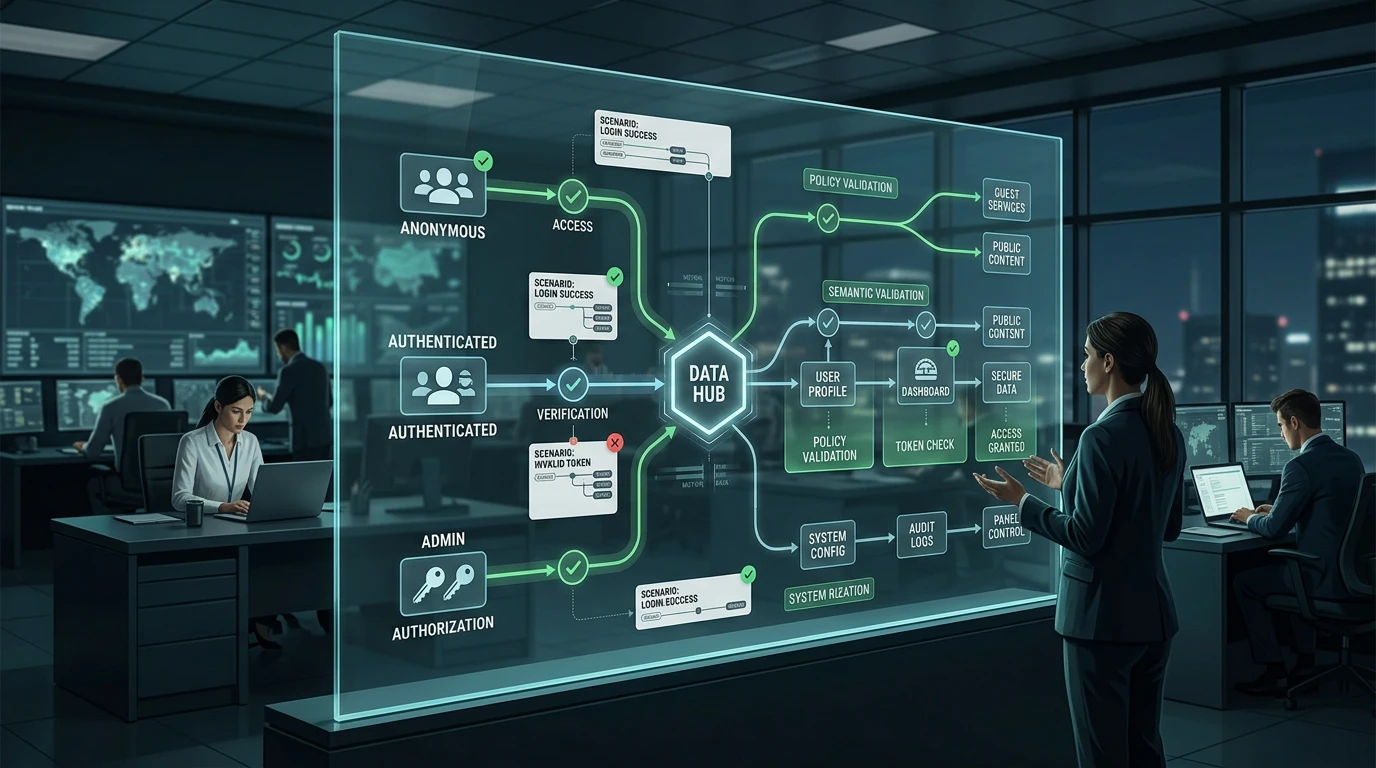

Quick context: Turborepo is a build and task orchestration tool for monorepos, and pnpm is the package manager that handles workspace dependencies. Next.js is the web frontend framework. Nhost functions are serverless backend functions on the Nhost platform. GraphQL is the API query layer clients use to fetch and mutate data. In this article, “role-aware” means anonymous users, authenticated users, and admins each have different allowed behavior by design.

To keep integration friction that low, I kept everything in one Turborepo + pnpm workspace.

The repo has two main apps:

- apps/web (Next.js)

- apps/backend (Nhost functions with GraphQL)

The product itself is role-sensitive. Anonymous and authenticated users should not see or do the same things. As soon as chat entered the product, role mistakes became the highest-risk bug class.

A response can look perfectly reasonable and still leak something it should not.

Why the monorepo choice mattered

A multi-repo split can work, but for this project it would have slowed the exact changes I made most often:

- GraphQL schema updates

- resolver behavior updates

- frontend query usage updates

- chat test expectation updates

In a monorepo, I can change those together and validate the full chain quickly.

The catch is boundary discipline. Monorepos make bad coupling easier too. I had to enforce package boundaries on purpose and avoid “quick import” shortcuts that become long-term debt.
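One way to make that discipline mechanical is a small check that flags imports reaching from one app into another. The sketch below is illustrative only: the rule itself, the function names, and the `packages/shared` path are assumptions, not the actual MyCritters setup, and it expects import paths that have already been resolved relative to the repo root.

```typescript
// Hypothetical boundary rule: code under apps/<name>/ must not import
// from a different app's directory. Shared code would live elsewhere
// (for example a packages/ folder, which is an assumption here).

// Extracts the app name from a repo-relative path, or null if the
// path is not inside apps/.
function appOf(repoRelativePath: string): string | null {
  const match = /^apps\/([^/]+)\//.exec(repoRelativePath);
  return match?.[1] ?? null;
}

// True when the importer and the imported file live in different apps.
function crossesAppBoundary(importerPath: string, importedPath: string): boolean {
  const from = appOf(importerPath);
  const to = appOf(importedPath);
  return from !== null && to !== null && from !== to;
}

// A frontend file importing a backend function directly is a "quick import" shortcut.
const shortcut = crossesAppBoundary("apps/web/src/page.tsx", "apps/backend/functions/chat.ts");

// Importing from a shared package (hypothetical path) does not trip the rule.
const shared = crossesAppBoundary("apps/web/src/page.tsx", "packages/shared/index.ts");

console.log({ shortcut, shared });
```

A check like this could run as a lint step, so the shortcut fails review before it becomes debt.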

GraphQL with role-aware expectations

The GraphQL layer is where many permission mistakes hide. You can accidentally expose a field path that works in authenticated flows and then behaves unexpectedly (or dangerously) for anonymous callers.

I made role context explicit in the way I reasoned about the API:

- anonymous contract

- authenticated contract

- admin-only contract where needed
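As a rough illustration of what an explicit contract per role can look like, here is a minimal sketch that models each contract as an allow-list of field paths. The field names and the `disallowedFields` helper are hypothetical, and this is a reasoning aid for tests and review, not how Nhost enforces permissions.

```typescript
type Role = "anonymous" | "authenticated" | "admin";

// Hypothetical field paths, invented for this example.
const roleContracts: Record<Role, ReadonlySet<string>> = {
  anonymous: new Set(["critter.name", "critter.species"]),
  authenticated: new Set(["critter.name", "critter.species", "critter.ownerNotes"]),
  admin: new Set([
    "critter.name",
    "critter.species",
    "critter.ownerNotes",
    "critter.auditLog",
  ]),
};

// Returns the requested field paths that the role's contract does not allow.
function disallowedFields(role: Role, requested: string[]): string[] {
  const allowed = roleContracts[role];
  return requested.filter((field) => !allowed.has(field));
}

// A query shape that is fine for signed-in users but not for anonymous callers.
const requested = ["critter.name", "critter.ownerNotes"];
console.log(disallowedFields("anonymous", requested));
console.log(disallowedFields("authenticated", requested));
```

Writing the contracts down as data gives a reviewer a concrete list to challenge for each role.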

I also documented assumptions in scenario definitions rather than leaving them in my head. This mattered for review quality. A reviewer can challenge a specific expectation. They cannot challenge implicit intuition.

Chat testing: where theory met production reality

Early chat testing was too optimistic. I had tests that passed and still missed meaningful regressions.

The fixes were practical:

- seeded scenarios based on real request patterns

- role-separated runs

- semantic constraints instead of exact response strings

For each scenario, I define:

- role

- prompt

- required concepts

- prohibited concepts

- intent notes

This made failures easier to triage. Instead of “the answer changed,” I could say, “the signed-in user scenario lost required compliance wording” or “the anonymous-user scenario leaked role-specific detail.” That level of specificity changed team conversations for the better.
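The scenario fields above map naturally onto a small data type plus a check that reports which concept failed and why. The sketch below is a minimal version under stated assumptions: the type names, the example scenario, and the matching strategy (case-insensitive substring search) are all illustrative, and a real harness would likely need a more robust notion of "concept" than a substring.

```typescript
type Role = "anonymous" | "authenticated" | "admin";

interface ChatScenario {
  role: Role;
  prompt: string;
  requiredConcepts: string[];
  prohibitedConcepts: string[];
  intentNotes: string;
}

interface ScenarioFailure {
  kind: "missing-required" | "leaked-prohibited";
  concept: string;
}

// Naive semantic check: a concept counts as present when it appears
// as a case-insensitive substring of the response.
function evaluateResponse(scenario: ChatScenario, response: string): ScenarioFailure[] {
  const text = response.toLowerCase();
  const failures: ScenarioFailure[] = [];
  for (const concept of scenario.requiredConcepts) {
    if (!text.includes(concept.toLowerCase())) {
      failures.push({ kind: "missing-required", concept });
    }
  }
  for (const concept of scenario.prohibitedConcepts) {
    if (text.includes(concept.toLowerCase())) {
      failures.push({ kind: "leaked-prohibited", concept });
    }
  }
  return failures;
}

// Hypothetical scenario, invented for this example.
const anonymousSavedCritters: ChatScenario = {
  role: "anonymous",
  prompt: "How do I see my saved critters?",
  requiredConcepts: ["sign in"],
  prohibitedConcepts: ["owner notes"],
  intentNotes: "Anonymous callers should be pointed to sign-in, never shown account data.",
};

// A leaking response yields one failure per violated constraint.
const failures = evaluateResponse(
  anonymousSavedCritters,
  "Your owner notes say the critter prefers morning walks.",
);
for (const failure of failures) {
  console.log(`${anonymousSavedCritters.role}: ${failure.kind} "${failure.concept}"`);
}
```

Because each failure names the role, the kind of violation, and the concept, the report reads like the triage sentences above instead of a raw string diff.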

Tooling and workflow decisions

Turborepo's task graph and pnpm workspaces kept workflows repeatable. I could run targeted checks for the packages a change touched rather than rerunning everything blindly.

I also treated docs as test infrastructure. Scenario files, role notes, and harness behavior are part of the product contract.

Without those docs, test intent drifts, and eventually the suite still runs but no longer protects the things you care about.

What was harder than expected

Three things took more effort than I predicted.

First, writing strong semantic assertions. Too strict and you get noise. Too loose and you miss regressions.

Second, preventing scenario bloat. Every newly discovered edge case tempts you to add another test, and without pruning the suite never stops growing.

Third, keeping role rules clear across both GraphQL and chat layers. If those definitions diverge, debugging gets painful fast.

I handled this by prioritizing high-risk scenarios and regularly pruning weak or redundant cases.

What changed after this architecture settled

The biggest change was speed with confidence.

Cross-cutting changes became easier to ship because I could update schema, backend behavior, frontend usage, and role-aware chat expectations in one workflow.

More importantly, I stopped depending on memory for permission-sensitive checks. The harness catches the obvious regressions, and manual review can focus on the nuanced stuff.

This is not perfect coverage, and I do not present it that way. It is a stronger baseline for the failure modes that actually hurt users.

Tradeoffs I still manage

Monorepo leverage comes with active maintenance work.

- boundaries must be enforced continuously

- test infrastructure has to stay aligned with product behavior

- semantic assertions need occasional tuning as prompts and models change

I accept those costs because they are visible and tractable. The alternative is hidden risk spread across disconnected repos and informal QA.

What I would do next

If I keep iterating on this stack, I want two upgrades.

First, longitudinal drift reporting that highlights meaningful response changes by scenario and role over time.

Second, stronger negative testing generated from real bug reports and support issues, not only from developer-designed prompts.

Both would improve coverage in the places where products usually get surprised.

Closing note

MyCritters works because architecture and validation are tied together. The monorepo is not the goal by itself. Role-safe product behavior is the goal.

Turborepo + pnpm gave me the structure to move fast. Role-aware GraphQL contracts and a scenario-based chat harness gave me guardrails so “fast” did not turn into “fragile.”

That combination is what made the system useful in daily development rather than impressive only in a diagram.